Almost a century ago, H.G. Wells predicted that “It is quite possible that in contact with western science, and inspired by the spirit of history, the original teaching of Gautama [The Buddha], revived and purified, may yet play a large part in the direction of human destiny.”

Today, we’re seeing this cross-fertilization between science and mindfulness practice becoming a reality. It seems like you can’t scroll through a Facebook feed without coming across an article describing the latest scientific study about meditators reporting less stress, exhibiting higher pain tolerance, and behaving more compassionately. Through fMRI brain scanning technology and behavioral studies, science has managed to provide objective evidence that meditation does indeed work. Thanks to science, what once was dismissed as something only hippies and airy-fairy new agers did has now moved into the mainstream.

This is just the beginning. As I hope to illustrate in the examples below, science and technology will do more than provide us with empirical data suggesting that meditation has value; they will revolutionize meditation practice itself. They will provide us with the tools to help this ancient discipline become many times more effective than it has ever been.

Innovation #1: Mind Reading Technology

While “mind reading technology” sounds like science fiction, the tools that enable us to read brainwaves and record them have been around for more than 100 years. Admittedly, brainwave reading technology, or electroencephalography (EEG), was quite primitive at its inception; likening it to mind reading would have been like comparing stargazing to space exploration. Today, however, the technology has evolved to the point where someone wearing a relatively unobtrusive headset can manipulate objects on a screen using mind commands alone.

The Emotiv Headset

To me, the most exciting application of this technology is one that will help beginning meditation students improve their concentration, their ability to focus on one thing at a time without getting distracted. As concentration becomes stronger, meditators begin to notice the subtlest of sensations, like tiny biochemical reactions on the skin, or the very movements of one’s inner organs. Meditators use concentration to develop insights about the nature of mind and body, just as scientists use electron microscopes or particle accelerators to understand the nature of the physical world.

A common meditation technique for students to develop concentration is to focus on the breath. A student will follow the sensations of the in-breath and the out-breath and will seek to stay focused on these sensations without getting lost in thought. This isn’t easy, and beginners often get lost in a stream of thoughts within the first few breaths. When this happens, the student is instructed to gently bring their attention back to the breath once they’ve realized their mind has wandered.

This is a frustrating process since many students won’t realize that they’ve been lost in thought until 5-10 minutes after the fact. To help shorten this period, meditation teachers will speak up after 10- or 20-minute intervals of silence to remind students to go back to the breath if their attention has wandered. While this is better than nothing, wouldn’t it be nice if the teacher could tell a student to return their focus the very moment their mind wanders?

This is precisely what mind-reading technology can help us do. The soon-to-be-released app, BrainBot, for example, uses an EEG headset that connects to an iPhone through Bluetooth. During the course of a meditation session, the headset will monitor brain activity to determine whether or not you’ve lost focus. Once the BrainBot app detects that your mind has started chasing errant thoughts, your phone will tell you to refocus your attention. (Check out the TEDx talk from one of BrainBot’s founders.)

Giving meditators a nudge whenever they need to refocus, however, is just the beginning when it comes to the potential of mind reading technology—especially when it’s teamed up with…

Innovation #2: Artificial Intelligence (AI)

Broadly speaking, there are three levels of intelligence where software is concerned. The first is the software that forms the majority of code written since people started writing code. It mindlessly does exactly what it’s programmed to do and nothing more. A good example of this is your run-of-the-mill pocket calculator.

Second, there is “narrow” artificial intelligence. This is software built upon very complex algorithms meant to perform a specific task very well. An often-cited example of narrow AI is Deep Blue, the computer that defeated the chess world champion Garry Kasparov in 1997. While Deep Blue can simulate millions of possible chess moves per second, it can really only understand the rules of chess, and is (without significant modifications made by humans) completely useless outside the 64-square universe of a chessboard.

Finally, there is “general” artificial intelligence (also called universal AI). A general AI system can take in external inputs from the outside world and determine its own goals and objectives based on the situation at hand. A general artificial intelligence could figure out the rules of chess by studying videos of chess matches instead of being fed the rules through lines of code. Although we are a ways off from computers learning chess, we do have software that can play tic-tac-toe and Pac-Man, and can solve the Tower of Hanoi problem without being pre-programmed to do so. As we’ll see in the examples below, all three types of software (non-intelligent software, narrow AI, and general AI) have the potential to provide great benefit to meditation students.

Non-Intelligent Meditation Software

One doesn’t need to utilize AI technology in order to create incredibly powerful tools to aid a meditator in their practice. Oftentimes these solutions have algorithms that are much less complex than those in your average video game.

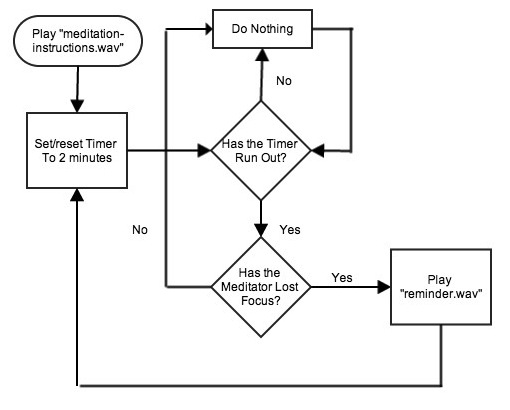

Take an app like the BrainBot example mentioned above. Here’s a very abstracted representation of the algorithm governing the function of the application:

Let’s go through this algorithm step by step:

- First, the application starts and plays an audio recording encoded in a .wav file with some basic instructions for meditation.

- Once the instructions are complete, a timer starts and gives the meditator 2 minutes to focus their mind.

- When the timer runs out, the app then uses the information it receives from the brainwave scanning device to determine whether or not the meditator has lost focus.

- When both the timer reads zero and the meditator’s brainwaves match what the app has predetermined as an “unfocused” mind, the reminder plays and the whole thing starts over again.
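The four steps above can be sketched as a short loop. This is a minimal illustration and not BrainBot’s actual code: the function name `run_session`, the file names `instructions.wav` and `reminder.wav`, and the `is_unfocused`, `play`, and `wait` callbacks are all hypothetical stand-ins for the EEG check, the audio player, and the timer.

```python
from typing import Callable


def run_session(
    is_unfocused: Callable[[], bool],
    play: Callable[[str], None],
    cycles: int,
    focus_seconds: float = 120.0,
    # No-op by default so the sketch runs instantly; a real app might pass time.sleep.
    wait: Callable[[float], None] = lambda seconds: None,
) -> int:
    """Run the instruction/timer/check/reminder loop for `cycles` timer rounds.

    All names here are hypothetical. Returns the number of reminders played.
    """
    play("instructions.wav")        # step 1: play the basic instructions
    reminders = 0
    for _ in range(cycles):
        wait(focus_seconds)         # step 2: give the meditator two minutes
        if is_unfocused():          # step 3: consult the EEG reading at zero
            play("reminder.wav")    # step 4: remind, then start the timer again
            reminders += 1
    return reminders


# Simulated session: the fake headset reports "unfocused" on rounds 2 and 3.
readings = iter([False, True, True, False])
played = []
reminder_count = run_session(lambda: next(readings), played.append, cycles=4)
print(reminder_count, played)
```

The sketch reads “the whole thing starts over” as restarting the timer, not replaying the instructions; that reading is an assumption.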

Of course, this flowchart masks the complexity involved in processing the reams of data that the brainwave scanning device sends to the app. I imagine that thousands of lines of code would be required to determine just what “losing focus” would mean to a machine. Human beings would have to measure the brainwaves of enough meditators to determine just what ranges of frequency and amplitude of alpha and beta (and delta and theta and gamma) waves would constitute a focused or unfocused mind. These parameters would have to be painstakingly measured, recorded, and spoon-fed into the app before it could even begin to answer the question: “Has the meditator lost focus?” So, although the idea behind the app is really quite brilliant, the app itself is not all that intelligent.
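To give a feel for what “spoon-fed parameters” might look like, here is a toy sketch that measures how much signal power falls into a few brainwave bands and applies one hard-coded rule. Everything specific in it is an assumption made for illustration: the band edges are conventional approximate values, and the theta-to-beta threshold in `looks_unfocused` is a placeholder, not a validated measure of focus or anything a real app is known to use.

```python
import cmath
import math

# Conventional, approximate EEG band edges in Hz (an assumption of this sketch).
BANDS = {"theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 30.0)}


def band_powers(samples, sample_rate):
    """Sum squared DFT magnitudes of `samples` that fall inside each band."""
    n = len(samples)
    powers = {name: 0.0 for name in BANDS}
    for k in range(1, n // 2):                      # positive frequencies only
        freq = k * sample_rate / n
        coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(samples))
        for name, (low, high) in BANDS.items():
            if low <= freq < high:
                powers[name] += abs(coeff) ** 2
    return powers


def looks_unfocused(samples, sample_rate, max_theta_beta_ratio=2.0):
    """Hypothetical rule: flag the window when theta power dominates beta."""
    powers = band_powers(samples, sample_rate)
    return powers["theta"] > max_theta_beta_ratio * powers["beta"]


def sine(freq, sample_rate=64, seconds=1):
    """Generate a pure tone to stand in for a one-channel EEG window."""
    return [math.sin(2 * math.pi * freq * i / sample_rate)
            for i in range(sample_rate * seconds)]


print(looks_unfocused(sine(6), 64))    # 6 Hz tone sits in the theta band
print(looks_unfocused(sine(20), 64))   # 20 Hz tone sits in the beta band
```

Real EEG is far noisier than a pure tone, which is why the measuring and recording described above would still be needed before any such threshold meant anything.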

Narrow AI and the Virtual Meditation Teacher

Facial recognition systems in security cameras, self-driving cars, and programs that can “read” scanned documents and convert them into encoded text (often referred to as optical character recognition) are just a few examples of how narrow AI systems are making their way into our daily lives. If enough effort were applied in this direction, we wouldn’t be too far off from adding “providing meditation instruction” to the list.

The first time I was introduced to the idea of a virtual meditation teacher was in a Buddhist Geeks Interview with the meditation instructor and science scholar Shinzen Young. In the interview, Young mentions a project he has in the works, called “Virtual Shinzen,” whereby an automated program would periodically ask a student certain questions about his or her mental state and then prescribe a meditation technique appropriate to that state of mind in accordance with a “meditation algorithm.”

Narrow AI would take this concept one step further by helping to process the subtle ebb and flow of mind states just as Deep Blue would process the millions of potential moves on a chessboard. In order to develop something like this, students wearing EEG scanning devices would provide the raw data of their brainwave activity from a meditation session and then describe their experience to a human teacher. The teacher would then provide the appropriate advice for each shift in mind state. The narrow AI system, after getting enough data from students and instructions from teachers, would develop certain heuristic guidelines, or “rules of thumb,” about the appropriate meditation advice to give a student depending on the brainwave readings the EEG scanners pick up. The more data the virtual meditation teacher collects from students, the better those heuristics will become.
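One very simple way to turn teacher-labelled readings into “rules of thumb” is a nearest-neighbour lookup: given a new reading, find the most similar past readings and repeat the advice teachers gave most often for them. The sketch below uses that technique purely as an illustration. The two-number feature vectors (imagined as relative alpha and beta power), the advice strings, and the function name `suggest_advice` are all hypothetical.

```python
import math
from collections import Counter


def suggest_advice(examples, reading, k=3):
    """Return the advice most common among the k examples closest to `reading`.

    `examples` is a list of (feature_tuple, advice) pairs labelled by a teacher.
    """
    nearest = sorted(examples, key=lambda ex: math.dist(ex[0], reading))[:k]
    votes = Counter(advice for _, advice in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical training data: (relative alpha, relative beta) -> teacher's advice.
KEEP = "keep following the breath"
RETURN = "label the thought and return to the breath"
examples = [
    ((0.80, 0.20), KEEP),
    ((0.70, 0.30), KEEP),
    ((0.20, 0.90), RETURN),
    ((0.30, 0.80), RETURN),
    ((0.25, 0.85), RETURN),
]

print(suggest_advice(examples, (0.75, 0.25)))
print(suggest_advice(examples, (0.20, 0.80)))
```

Adding more labelled pairs to `examples` is all it takes for this kind of lookup to change its answers, which mirrors the idea that more student data yields better heuristics.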

Initially, meditation students taking instructions from virtual teachers will probably need to check in with human teachers at least once a month (probably once a day, for users of Alpha versions of this program). The students will describe their experience to the human teachers and the teachers will then examine the advice given by the virtual teachers for quality assurance. If the virtual teacher gives inappropriate advice (which will happen), the human teacher will provide a correction and the AI system will take that into account for future teaching sessions. Over time, these virtual AI systems could become complex enough that the teachings they provide could become virtually (pun intended) indistinguishable from those of the great masters. In fact, their teachings could even be better because the AI teachers would have the ability to monitor mind states in real time.

General AI and the “Cyberguru”

Depending on whom you ask, we’re about 50 to 100 years away from creating AI that would match (and then quickly surpass) human intelligence. This would have mind-boggling consequences for us as a species, our very extinction being one possible outcome. If we do manage to create an artificial intelligence that doesn’t kill us, however, it will most likely lead to a quantum leap in our understanding of the inner workings of our own minds, as well as meditation and mindfulness practices.

Meditation techniques are, boiled down to their essence, no more than algorithms: sets of instructions, rules, and triggers that change based on certain conditions. A computer working through an algorithm cycles through a series of conditions and then performs actions based on those conditions. The benefit of narrow AI is that it can potentially digest existing teachings and techniques from the meditation masters, and then provide instruction comparable to that of the masters themselves, perhaps even better instruction because of the mind reading capabilities it would conceivably have.

General AI would take meditation one step further by formulating new solutions and meditation techniques from scratch. It would take the guidelines from the existing masters and then gobble up the massive amounts of data that meditators provide through the use of EEG headsets. This would help refine meditation techniques much faster than the slow evolution they’ve had over thousands of years.

In order to develop general AI, we must either write extremely complicated software which can exhibit intelligence, or we must create what is called Full Brain Emulation (FBE), whereby we simulate the workings of the human brain through electronics. At this point it seems like a toss-up which type of AI we’ll create first, but when it comes to mindfulness, FBE seems to have the greater potential. The development of FBE will be in large part due to…

Innovation #3: Neuroinformatics

In 2005 a group of scientists in Switzerland founded the Blue Brain Project with the goal of creating a computerized model of the human brain by 2023. The scope of the work is ambitious. These scientists aim to create 3D computerized models of billions of neurons and trillions of synapses which would then emulate the behavior of neurons and synapses observed in the brain’s neocortex (the “thinking” layer of the brain). Obviously this would take a lot of data and processing power. Just to give you an idea, one simulated second of what amounted to “half a mouse brain” (about 8 million neurons) took ten seconds of computing time on one of the fastest supercomputers in the world.

By creating a reliable computerized model of the human brain, we could answer a question that none of the meditation masters of the past could have answered: “What happens in the brain when we meditate?” Should Moore’s law continue to hold up and the processing power of computers continue to increase exponentially, we could simulate what amounts to 15 years of mindfulness meditation in a brain emulator using less than a year’s computing time. Hyperintelligent AI computers (even narrow AI computers) could then use their enhanced capability to recognize patterns within complex systems to determine just what conditions need to be present in the brain in order for its owner to experience that which the meditation masters call enlightenment: the complete cessation of suffering and a dissolution of the sense of self.
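As a rough back-of-the-envelope check on that timescale, the sketch below asks how many years of steady doubling it would take to go from ten seconds of computing per simulated second to fifteen simulated years per computing year. The assumptions are mine, not the projects’: a doubling of processing power every two years, computing cost that scales linearly with neuron count, and a commonly cited estimate of about 86 billion neurons in the human brain.

```python
import math


def years_of_doubling(required_speedup, doubling_years=2.0):
    """Years of steady doubling needed to gain `required_speedup`."""
    return doubling_years * math.log2(required_speedup)


CURRENT_SLOWDOWN = 10        # from the text: 10 s of computing per simulated second
TARGET_SPEEDUP = 15          # from the text: 15 simulated years per computing year
MOUSE_SIM_NEURONS = 8e6      # from the text: "half a mouse brain"
HUMAN_NEURONS = 86e9         # assumption: commonly cited estimate

# Same-sized simulation, just faster.
same_size = years_of_doubling(CURRENT_SLOWDOWN * TARGET_SPEEDUP)

# Human-sized simulation, assuming cost grows linearly with neuron count.
human_size = years_of_doubling(
    CURRENT_SLOWDOWN * TARGET_SPEEDUP * HUMAN_NEURONS / MOUSE_SIM_NEURONS
)
print(f"{same_size:.1f} years at mouse scale, {human_size:.1f} years at human scale")
```

Under these assumptions the answer comes out to roughly 14 years for a simulation of the same size and roughly 41 years for a human-sized one. The linear-scaling assumption is almost certainly too generous, so treat both figures as optimistic lower bounds.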

If we had a neuron-by-neuron map of the enlightened brain, we would then be able to find shortcuts that could help meditation practitioners achieve mastery much faster than anyone has been able to do in the 2,500+ years of this tradition. We could, essentially, “hack” meditation by having an objective, data-driven understanding of what meditation techniques are effective and what meditation techniques aren’t. From this understanding we could create new techniques and perhaps even create new meditation tools and software, a “BrainBot 5.0” if you will. We could perhaps even learn how to shut off the very neurons in the brain that work to produce the sense of self, thereby creating a technologically induced moment of Satori. I’m sure the possibilities don’t end there.

The Revolution Has Already Begun

The technologies mentioned in this article are more than just science fiction. Mind reading EEG technology and narrow AI are very real and have many useful applications even today. Perhaps the most far-fetched of the three is the creation of a computerized brain model, as there are many who are still skeptical about the project, especially in light of recent news that the brain is much more complex than we had originally thought it was. What is evident to me, however, is that we are starting to see the most fascinating applications of recent technological advances to help us understand how to make mindfulness practice more effective.

What other advances do you envision that might revolutionize meditation practice? Please leave your thoughts in the comments below.

Further Reference:

Hawkins, Jeff (Jun 23, 2008). “Jeff Hawkins on Artificial Intelligence.”

URL: http://www.youtube.com/watch?v=oozFn2d45tg

Markram, Henry (2008). “Henry Markram: The Blue Brain Project”

URL: http://www.youtube.com/watch?v=8iDR8Z-e_GU

Markram, Henry (Jul 29, 2009). “A Brain in a Supercomputer.”

URL: http://www.ted.com/talks/henry_markram_supercomputing_the_brain_s_secrets.html

Modha, Dharmendra (Feb 17, 2012). “Dharmendra Modha of IBM on Whole Brain Emulation.”

URL: http://www.youtube.com/watch?v=tqeINGOzIZo

Muehlhauser, Luke (2012). “Intelligence Explosion: Evidence and Import”

URL: http://intelligence.org/files/IE-EI.pdf

Sandberg, Anders (Jun 1, 2010). “Whole Brain Emulation: The Logical Endpoint of Neuroinformatics?”

URL: http://www.youtube.com/watch?v=kRB6Qzx9oXs

Young, Shinzen (Apr 18th, 2012). “Shinzen’s Blog: How to Enlighten the World.”

URL: http://shinzenyoung.blogspot.com/2012/04/how-to-enlighten-world.html

Young, Shinzen (Apr 25th, 2012). “Toward a Science of Enlightenment.”

URL: http://www.youtube.com/watch?v=OZuxZ3BYvNM

Warren, Jeff (Jan 2013). “How Understanding the Process of Enlightenment Could Change Science.”

URL: http://www.psychologytomorrowmagazine.com/inscapes-enlightenment-and-science/